Ogres Have Layers.

So Does AI in Education.

Your provost, your CIO, and your curriculum committee are all asking each other about "AI in education." They are talking about four different things. This activity is a short walk through what those four things are, why mixing them up costs institutions money and credibility, and what changes the next time a student asks you "can I use AI for this?" Drawn from Chapter 5 of The Learn-It-All Educator.

Twelve steps. One framework. Click through at your own pace. The print version at the end captures the entire framework on letter-size paper for distribution to your department.

Meet the 1980s instructor.

An excellent instructor who left industry in 1985 and has taught continuously since. Four decades in the classroom. Award winner. Genuinely cares about his students. And then AI arrives.

He learns how large language models work. He understands probability engines, bias, and hallucination. He uses AI to draft lesson plans, auto-generate quiz banks, and format his LMS pages. He redesigns his assignments so students must collaborate with AI, verify its output, and develop editorial judgment over AI-generated text.

He has done everything right.

"And he has still completely missed an entire category. He was so preoccupied with AI's impact on his own job that he had little time to experience the impact on the workplace. He has not practiced in industry for forty years, but the last three years is what students need to know about. His teaching is excellent. His field currency may not be."

The last three years he never lived through.

He does not know that AI is automating the supply chain decisions his business students will encounter at their first job. He does not know that contract review software is transforming the legal field his paralegal students are preparing to enter. He does not know that clinical decision-support systems are being deployed in the nursing facilities where his health science students will work.

This is not a failure of effort or intelligence. It is a structural gap. Medical schools do not have it because clinical faculty are active practitioners. But a community college business instructor teaching the same supply chain course for fifteen years? A polytechnic's paralegal program staffed by career academics? The gap is real, and it is growing.

If you read Chapter 4, you recognize this instructor. He is not a know-it-all resisting change. He is a genuine learn-it-all who has embraced every framework in this book. The problem is that no amount of Intelligent Simpleton humility fixes a structural information deficit. He cannot teach what he does not know his students need. And our institutional frameworks gave him no vocabulary to name what was missing.

The AAMC saw this problem clearly.

In January 2025, the Association of American Medical Colleges published principles that split the concept into two categories.

AI in medicine

Clinical application. A physician using AI to read a CT scan. A predictive model flagging sepsis risk. An ambient scribe documenting a patient encounter. AI embedded in the practice of medicine.

AI for medicine

Institutional machinery. AI screening residency applications. Generating practice questions. Summarizing student evaluations. AI as operational infrastructure, the business of running a medical school.

The AAMC's implicit logic is worth stating plainly: AI for medicine is a turbocharger. AI in medicine is a new literacy. One is about efficiency. The other is about competence. Delaying the turbocharger carries administrative cost. Delaying the literacy carries curricular cost: graduates enter fields where AI competency is increasingly expected without having it.

The classroom is the clinic.

In medical school, students see patients during training. The AI a student learns about in lecture is the same AI they encounter on rounds. The educational context and the professional context overlap almost completely.

The rest of higher education operates under a different structure. The classroom and the profession are separated by years, by disciplines, by physical buildings, and by entire industries. The supply chain student does not work in a warehouse during her sophomore year. The graphic design student does not bid on illustration commissions before graduation. The accounting student does not see the audit-trail AI her firm will deploy until she is hired.

That gap is exactly where the framework needs to expand. Two layers cover medicine. Four layers cover the rest of higher education.

The universal foundation.

AI Literacy

Do people understand how AI itself works?

Probability engines, bias, ethics, hallucination. Identical across every discipline, which is what makes it foundational rather than disciplinary. A nursing student and a graphic design student need the same conceptual understanding of how training data shapes output. If you read Chapter 2, you already possess more AI literacy than most: you understand that AI is a probability engine, not a calculator, and that understanding changes everything about how you interact with it.

AI Literacy has a structural property no previous form of literacy possessed. The subject of the literacy is the assessment instrument. A library database cannot quiz you on whether you understand how to use it. A calculator cannot test whether you understand what the numbers mean. But AI can hold a conversation that probes whether you understand how it works, and it can do this at scale, repeatedly, embedded in any course. The gateway-course-and-forget model fails for digital literacy and information literacy. AI Literacy does not have to repeat the mistake.

Institutional FLUFF.

AI for Edu

Is AI making the institution run better?

LMS automation, enrollment prediction, advising chatbots, degree audit, early-alert systems, AI-assisted scheduling. The infrastructure layer, the operational machinery that keeps the institution functioning. These tools exist only because the organization is a school, but the layer is optional in the sense that the institution functioned before AI and will function without it, just less efficiently.

From a Cognitive Triage perspective, this is institutional-scale FLUFF: important work whose value is capped. Buying a better advising chatbot does not make graduates more competent.

Institutional SPARK.

AI in Edu

Is AI making students think harder?

AI as a pedagogical instrument. AI as coach. AI-enhanced assignments. Progressive overload that uses AI to increase challenge rather than remove it. This is the layer where the Cognitive Gym operates.

When you design a Review Board assignment where students must defend their thesis against a hostile AI panel, you are working in this layer. When you use the Verification Protocol to shift assessment from generation to verification, you are working in this layer. This is where the cognitive muscle gets built.

Two warnings worth carrying forward. First, institutions that invest only in operational AI without addressing pedagogical AI are making the same mistake as the instructor who automates lesson planning but never redesigns an assignment. Second, the opposite trap exists too: "You cannot ask an adjunct teaching five sections to redesign their assignments for AI collaboration when they are still hand-entering grades into a spreadsheet." Layer 2 frees the time that Layer 3 requires.

The layer the 1980s instructor never named.

AI of the Profession

Do students know about the AI they will encounter in their careers?

The nursing student who must manage AI-driven alert fatigue and ambient AI scribes. The paralegal student who must know how AI contract review changes the scope of their role. The accounting student who must verify AI audit trails. The CIS student who must document AI agent orchestration frameworks. The graphic design student who must reckon with AI as a competitor, not a tool.

This layer is governed by advisory boards, accreditors, and industry partners, the people who know what the profession looks like now, not what it looked like when the instructor left it. It moves at the speed of the market, outpacing accreditors, licensing boards, and curriculum cycles.

"Why 'Profession' and not 'Workforce' or 'Industry'? Because in fine art, AI is not a workforce tool. It is a competitor. An MFA student does not need to learn how to wield AI in a future job the way a nursing student does. The MFA student needs to understand that AI is competing for illustration commissions. 'Profession' is neutral about whether AI is tool or threat. It covers both the nursing student and the art student without forcing either into the other's frame."

One question places any AI activity into its layer.

Who evaluates whether it worked?

| Layer | Who evaluates whether it worked? |
| --- | --- |
| AI Literacy | The institution evaluates it, or, if we are honest, it is discovered through failure in the other layers. |
| AI for Edu | Administrators evaluate it, watching efficiency metrics and peer benchmarks. |
| AI in Edu | Instructors evaluate it. Cognitive Gym rubrics, VINE assessments, AI Audit protocols all live here. |
| AI of the Profession | The marketplace evaluates it. Employers, clients, competitors. The feedback arrives after graduation, when it is too late for the institution to intervene. |

That gradient from tight feedback loops to slow ones explains why institutions over-invest in AI for Edu (fast dashboard metrics) and under-invest in AI of the Profession (no signal until the student is gone).
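For readers who think in lookups, the placement question can be sketched as a small table in code. This is only an illustration, not part of the book's framework: the evaluator labels (`"institution"`, `"administrators"`, `"instructors"`, `"marketplace"`) and the function name `place_activity` are hypothetical shorthand for the four rows above.

```python
from enum import Enum


class Layer(Enum):
    """The four layers named in this activity."""
    AI_LITERACY = "AI Literacy"
    AI_FOR_EDU = "AI for Edu"
    AI_IN_EDU = "AI in Edu"
    AI_OF_THE_PROFESSION = "AI of the Profession"


# Hypothetical shorthand labels for "who evaluates whether it worked?"
EVALUATOR_TO_LAYER = {
    "institution": Layer.AI_LITERACY,
    "administrators": Layer.AI_FOR_EDU,
    "instructors": Layer.AI_IN_EDU,
    "marketplace": Layer.AI_OF_THE_PROFESSION,
}


def place_activity(evaluator: str) -> Layer:
    """Return the layer whose success is judged by the given evaluator."""
    key = evaluator.strip().lower()
    if key not in EVALUATOR_TO_LAYER:
        raise ValueError(f"Unknown evaluator: {evaluator!r}")
    return EVALUATOR_TO_LAYER[key]


if __name__ == "__main__":
    # Example activities taken from the layer descriptions in this activity.
    examples = {
        "Advising chatbot": "administrators",
        "Review Board assignment": "instructors",
        "AI contract review in a law office": "marketplace",
    }
    for activity, evaluator in examples.items():
        print(f"{activity}: {place_activity(evaluator).value}")
```

The point of the sketch is that the layer is determined by the evaluator, not by the tool: the same chatbot product could land in different layers depending on who judges whether it worked.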

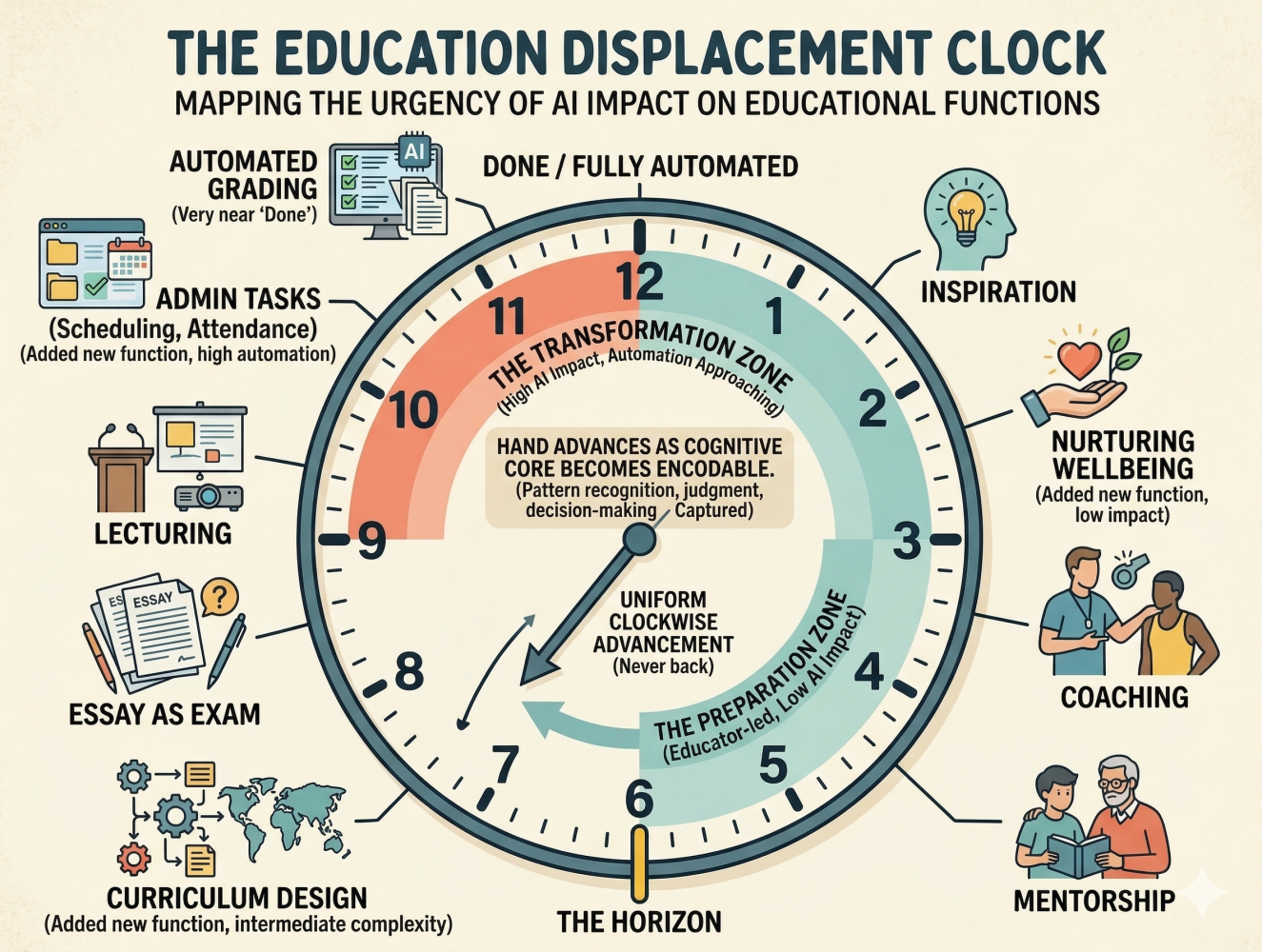

The four layers tell you what to govern. The clock tells you how urgently.

Picture an analog clock, but read it differently. The hand sweeps clockwise from safe, through the horizon at 6, to done at 12.

Most functions that matter to educators sit somewhere between 1 and 6, approaching the horizon, not past it. That makes the clock a planning tool, not an alarm. EHR documentation and routine diagnostic imaging reads are approaching 12. Clinical decision support, AI-assisted tutoring, and adaptive assessment design sit near 6. Compare clocks across fields and the message for students becomes career guidance.

Four layers. Four questions. Four governance structures.

Right now, the CIO is buying tools. The faculty senate is debating assignments. Career services is advising students about a job market being reshaped by AI. The general education committee is wondering whether every student needs to understand how LLMs work. They are all talking about "AI in education." They are rarely talking to each other. The vocabulary is simple. The institutional discipline to use it, to stop conflating infrastructure decisions with curricular ones, to stop pretending that buying a chatbot is the same as teaching a student, that is the hard part.

"The strategy conversation does not start in the boardroom. It starts the next time a student asks, 'can I use AI for this?' and you know exactly how to answer, not yes or no, but which layer applies."

From Section 5.4, A Shared Vocabulary

The educator who can hold all four questions at once does not have an AI problem. They have an AI practice. That is not strategy. That is teaching. The boardroom will catch up. That is the strategy. It was always yours to lead.

Read Chapter 5 in full · free OER

Chapters 1 through 4 of The Learn-It-All Educator are openly available under CC BY 4.0 on Zenodo. Chapter 5 is part of the complete print edition. The OER record is the canonical, citable source.

Bring this to your committee

The next time AI policy comes up in faculty senate, curriculum committee, or a CIO meeting, ask which layer the conversation is about. If the answer is "all of them," the conversation will not lead to a decision. If the answer names one layer cleanly, the right people are in the room. If it names a layer the room cannot govern, you have located the gap.
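The committee check above is a three-way decision, and it can be written out as a short sketch. The function name `diagnose_conversation` and the outcome labels are hypothetical. The sketch also makes one assumption the text does not state: any answer naming more than one layer is treated the same as "all of them."

```python
LAYERS = ("AI Literacy", "AI for Edu", "AI in Edu", "AI of the Profession")


def diagnose_conversation(layers_named, layers_room_governs):
    """Classify a policy conversation using the three outcomes described above.

    layers_named: layers the conversation claims to be about.
    layers_room_governs: layers the people in the room can actually govern.
    """
    named = set(layers_named)
    governed = set(layers_room_governs)

    unknown = (named | governed) - set(LAYERS)
    if unknown:
        raise ValueError(f"Not one of the four layers: {sorted(unknown)}")
    if not named:
        raise ValueError("Name at least one layer before diagnosing.")

    # Assumption: more than one layer is handled like "all of them".
    if len(named) > 1:
        return "no_decision"

    (layer,) = named
    if layer in governed:
        return "right_room"   # one layer, and this room can govern it
    return "gap_located"      # one layer, but nobody here governs it


if __name__ == "__main__":
    # A CIO meeting that can only govern operational tooling.
    room = ["AI for Edu"]
    print(diagnose_conversation(LAYERS, room))
    print(diagnose_conversation(["AI for Edu"], room))
    print(diagnose_conversation(["AI of the Profession"], room))
```

The third outcome is the useful one in practice: it tells you which governance structure is missing from the meeting rather than which tool to buy.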

Take this activity with you

Print the entire twelve-step walk as a handout for your department, faculty learning community, curriculum committee, or yourself. The print version cascades all twelve steps onto a clean letter-size document.